Thank you for the detailed explanation.

I ran the Envelope Correlation (2020) process on a concatenated time window of 379.92 seconds for 68 scouts, with the following options: 'Envelope correlation (orthogonalized)', time resolution set to 'Dynamic', an estimation window length of 20000 ms with 50% overlap, the parallel processing toolbox unchecked, and 'average among output files' as the output option.
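For reference, a minimal sketch of how many estimation windows these settings would produce. It assumes that only full windows are kept and that the step between windows is the window length times (1 - overlap). The function name `count_windows` is hypothetical, and Brainstorm may handle the last partial window differently.

```python
import math


def count_windows(total_s, win_s, overlap):
    """Count the full sliding windows that fit in a recording.

    Assumption: windows advance by win_s * (1 - overlap) and a trailing
    partial window is discarded. This is not taken from Brainstorm's code.
    """
    if total_s < win_s:
        return 0
    step_s = win_s * (1.0 - overlap)
    return math.floor((total_s - win_s) / step_s) + 1


# 379.92 s recording, 20 s windows, 50% overlap -> 10 s step
n_windows = count_windows(379.92, 20.0, 0.5)
print(n_windows)  # 36
```

Under these assumptions, the 'Dynamic' output would contain 36 connectivity estimates per scout pair and frequency band.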

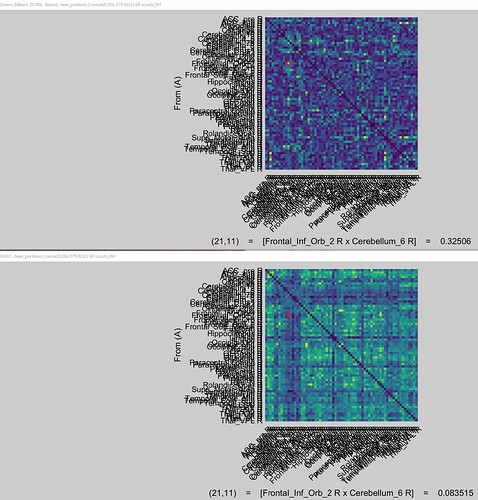

Following the discussion, I edited bst_connectivity.m to take the absolute value in both of the lines mentioned (lines 525 and 536 in my copy), to see whether the old AEC process could reproduce the results of the HENV process. Unfortunately, the two results differ (figures attached): the upper figure is from the HENV process, and the lower one is from the old AEC process with the absolute value added in the code. Could this be because the old AEC process computes a single measure across the whole time window? For the HENV process, I kept the 'dynamic' time resolution, as suggested in the tutorials and, as far as I understand, in this discussion.
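As a toy illustration of why the time resolution alone could explain a difference, the sketch below compares one correlation computed over a whole signal with the mean of correlations computed per window. It is not Brainstorm code: the helper names `pearson` and `windowed_mean_corr` and the toy "envelope" values are made up, the windows do not overlap, and it is only an assumption that the old AEC process behaves like the whole-signal case.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx = sum(x) / n
    my = sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def windowed_mean_corr(x, y, win, step):
    """Mean of the Pearson correlations computed on sliding windows."""
    starts = range(0, len(x) - win + 1, step)
    vals = [pearson(x[s:s + win], y[s:s + win]) for s in starts]
    return sum(vals) / len(vals)


# Toy envelopes: anti-correlated within each half, but both jump to a
# higher level in the second half (a slow shared trend).
env_a = [0, 1, 2, 3, 10, 11, 12, 13]
env_b = [3, 2, 1, 0, 13, 12, 11, 10]

whole = pearson(env_a, env_b)                         # about 0.905
windowed = windowed_mean_corr(env_a, env_b, 4, 4)     # -1.0

print(whole, windowed)
```

The whole-signal estimate is dominated by the shared slow trend, while the per-window estimates only see the fast fluctuations, so the two numbers need not agree even in sign.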

For both processes, I applied the orthogonalization and used the following frequency bands: delta / 1, 4 / mean; theta / 4, 8 / mean; alpha / 8, 12 / mean; beta / 12, 30 / mean; gamma / 30, 70 / mean; broadband / 1, 70 / mean.

Thank you in advance.