Hello,

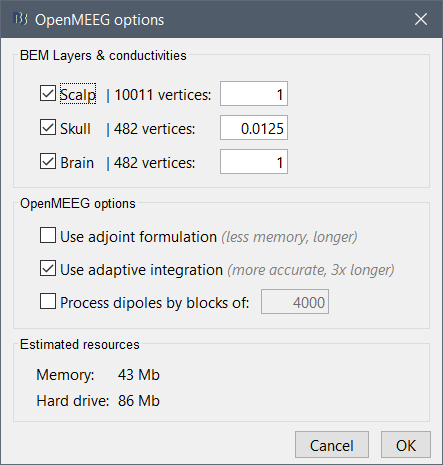

In an effort to quantify errors in forward models (mostly spherical, but I might also look at Nolte's 2003 method), I'm trying to build an accurate single-compartment BEM from an inner-skull mesh with 1 mm edges (40k vertices). The goal is an edge length of the same order as the minimum distance from the sources to the surface, which, as I understand it, is required to model these superficial sources accurately. Unfortunately, OpenMEEG crashes. My latest attempt was on a workstation with 48 GB of RAM, while Brainstorm indicates that OpenMEEG would need about 6 GB to run.
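For context, here is a rough back-of-the-envelope sketch of how a figure near 6 GB could arise. It rests on my own assumptions, not on anything documented by Brainstorm or OpenMEEG: one unknown per vertex for the single surface, double-precision values, and a symmetric matrix stored as its upper triangle only.

```python
# Rough size of one dense N x N matrix for N = 40k vertices.
# Assumptions (mine, not from Brainstorm/OpenMEEG docs): one unknown per
# vertex, 8-byte doubles, symmetric matrix kept as its upper triangle.
N_VERTICES = 40_000
BYTES_PER_DOUBLE = 8
GIB = 1024 ** 3


def packed_symmetric_bytes(n: int) -> int:
    """Bytes for the upper triangle (including diagonal) of an n x n matrix."""
    return n * (n + 1) // 2 * BYTES_PER_DOUBLE


def full_dense_bytes(rows: int, cols: int) -> int:
    """Bytes for a fully stored rows x cols matrix of doubles."""
    return rows * cols * BYTES_PER_DOUBLE


packed_gib = packed_symmetric_bytes(N_VERTICES) / GIB
full_gib = full_dense_bytes(N_VERTICES, N_VERTICES) / GIB
print(f"packed symmetric: {packed_gib:.1f} GiB, full: {full_gib:.1f} GiB")
```

Under these assumptions a single packed matrix is already close to 6 GiB, so any step that expands it to full storage, or holds several matrices of that size at once, would need a multiple of that.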

First, can you confirm whether this error (#137) is memory-related? Is there anything I can do to make this work other than reducing the mesh resolution (and therefore the accuracy)? Thanks!
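On the number itself: by shell convention, an exit status above 128 means the process was terminated by a signal whose number is the status minus 128. The sketch below decodes it, assuming that the "#137" Brainstorm reports is the raw exit status of `om_gain` and that it runs on a POSIX system (the helper name is hypothetical).

```python
import signal

EXIT_STATUS = 137  # value reported as "OpenMEEG error #137"


def signal_from_exit_status(status: int):
    """Return the terminating signal for a shell exit status, or None."""
    if status > 128:
        # Shell convention: 128 + signal number.
        return signal.Signals(status - 128)
    return None


sig = signal_from_exit_status(EXIT_STATUS)
print(sig.name if sig else "not killed by a signal")
```

A SIGKILL sent from outside the program is consistent with the kernel stopping the process for using too much memory, although the exit status alone does not prove it.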

./om_gain -MEG "/export02/data/marcl/temp/openmeeg_hminv.mat" "/export02/data/marcl/temp/openmeeg_dsm.mat" "/export02/data/marcl/temp/openmeeg_h2mm.mat" "/export02/data/marcl/temp/openmeeg_ds2meg.mat" "/export02/data/marcl/temp/openmeeg_gain_meg.mat" 2>&1: Killed

***************************************************************************

** Error: OpenMEEG call: om_gain -MEG

** "/export02/data/marcl/temp/openmeeg_hminv.mat"

** "/export02/data/marcl/temp/openmeeg_dsm.mat"

** "/export02/data/marcl/temp/openmeeg_h2mm.mat"

** "/export02/data/marcl/temp/openmeeg_ds2meg.mat"

** "/export02/data/marcl/temp/openmeeg_gain_meg.mat"

** OpenMEEG error #137:

** ./om_gain version 2.4.1 compiled at Aug 29 2018 08:19:44 using OpenMP

** Executing using 6 threads.

**

** | ------ ./om_gain

** | -MEG

** | /export02/data/marcl/temp/openmeeg_hminv.mat

** | /export02/data/marcl/temp/openmeeg_dsm.mat

** | /export02/data/marcl/temp/openmeeg_h2mm.mat

** | /export02/data/marcl/temp/openmeeg_ds2meg.mat

** | /export02/data/marcl/temp/openmeeg_gain_meg.mat

** | -----------------------

** /bin/bash: line 1: 26801 Killed ./om_gain -MEG "/export02/data/marcl/temp/openmeeg_hminv.mat" "/export02/data/marcl/temp/openmeeg_dsm.mat" "/export02/data/marcl/temp/openmeeg_h2mm.mat" "/export02/data/marcl/temp/openmeeg_ds2meg.mat" "/export02/data/marcl/temp/openmeeg_gain_meg.mat" 2>&1

**

** For help with OpenMEEG errors, please refer to the online tutorial:

** https://neuroimage.usc.edu/brainstorm/Tutorials/TutBem#Errors

***************************************************************************

Edit: Indeed, it used much more memory than Brainstorm estimated (can the estimate be improved?):

[Thu Apr 25 05:23:23 2019] Killed process 26801 (om_gain) total-vm:45331880kB, anon-rss:44350760kB, file-rss:1828kB, shmem-rss:0kB
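To put those numbers in more readable units, here is a minimal sketch that parses the kernel message above and converts each field to GiB. It assumes the kernel's "kB" values are 1024-byte units; the function name is hypothetical.

```python
import re

LOG_LINE = (
    "[Thu Apr 25 05:23:23 2019] Killed process 26801 (om_gain) "
    "total-vm:45331880kB, anon-rss:44350760kB, file-rss:1828kB, shmem-rss:0kB"
)


def parse_kb_fields(line: str) -> dict:
    """Map each 'name:<digits>kB' field in the line to its size in GiB."""
    kib_per_gib = 1024 ** 2  # assumes "kB" here means 1024 bytes
    return {
        name: int(kb) / kib_per_gib
        for name, kb in re.findall(r"([\w-]+):(\d+)kB", line)
    }


usage_gib = parse_kb_fields(LOG_LINE)
for name, gib in usage_gib.items():
    print(f"{name}: {gib:.2f} GiB")
```

The resident memory (anon-rss) comes out at roughly 42 GiB, so the process was killed close to the limit of the 48 GB machine. That only gives a lower bound on what the run actually needs.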

Any suggestions? It's not clear how much more memory I would need.