Machine learning: Decoding / MVPA

Authors: Dimitrios Pantazis, Seyed-Mahdi Khaligh-Razavi, Francois Tadel

This tutorial illustrates how to run MEG decoding (a type of multivariate pattern analysis / MVPA) using support vector machines (SVM).

License

To reference this dataset in your publications, please cite Cichy et al. (2014).

Description of the decoding functions

Two decoding processes are available in Brainstorm:

Decoding -> SVM classifier decoding

Decoding -> max-correlation classifier decoding

These two processes work in a similar way but use different classifiers, so only SVM is demonstrated here.

Input: the input is the recorded time series from two conditions (e.g. condA and condB). The number of samples (trials) per condition does not have to be the same for condA and condB, but each condition should have enough samples to create k folds (see the parameter below).

Output: the output is a decoding time course, or a temporal generalization matrix (train time x test time).

Classifier: Two methods are offered for the classification of MEG recordings across time: support vector machine (SVM) and max-correlation classifier.
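As a rough illustration of the second method, a max-correlation classifier can be read as assigning a test pattern to the class whose mean training pattern it correlates with most strongly. The Python sketch below assumes that reading. It is not taken from the Brainstorm source, and the function names and toy values are hypothetical.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def class_means(train):
    """Average the training patterns of each class, channel by channel."""
    return {label: [sum(ch) / len(ch) for ch in zip(*patterns)]
            for label, patterns in train.items()}

def max_correlation_predict(means, pattern):
    """Return the label whose mean pattern correlates best with `pattern`."""
    return max(means, key=lambda label: pearson(means[label], pattern))

# Toy data (assumption): 4 "channels" at one time point, two trials per class.
train = {
    "faces":   [[1.0, 2.0, 3.0, 4.0], [1.2, 1.9, 3.1, 4.2]],
    "objects": [[4.0, 3.0, 2.0, 1.0], [4.1, 2.8, 2.2, 0.9]],
}
means = class_means(train)
prediction = max_correlation_predict(means, [0.9, 2.1, 2.9, 3.8])
```

In a time-resolved analysis, the same prediction step would be repeated independently at every time sample.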

In this tutorial, we have two condition types: faces and objects. The participant was shown different types of images, and we want to decode the face images vs. the object images using 306 MEG channels.

Download and installation

From the Download page of this website, download the file 'sample_decoding.zip', and unzip to get the MEG file: subj04NN_sess01-0_tsss.fif

SVM decoding requires the LibSVM library. Brainstorm will install it automatically as a plugin when needed. On Linux and macOS, you may have to explicitly recompile the library:

- In the Matlab command window, execute "which svmtrain". If it returns an error message ('svmtrain' not found), you need to compile the library manually.

- Locate the matlab sub-folder in the libsvm installation folder: $HOME/.brainstorm/plugins/libsvm/libsvm-master/matlab

- In Matlab, go to this folder and execute the function "make.m".

- If it doesn't work, read the instructions in the README file in the same folder.

- You need a C compiler to be available on your computer. Mac users can use XCode.

- Start Brainstorm.

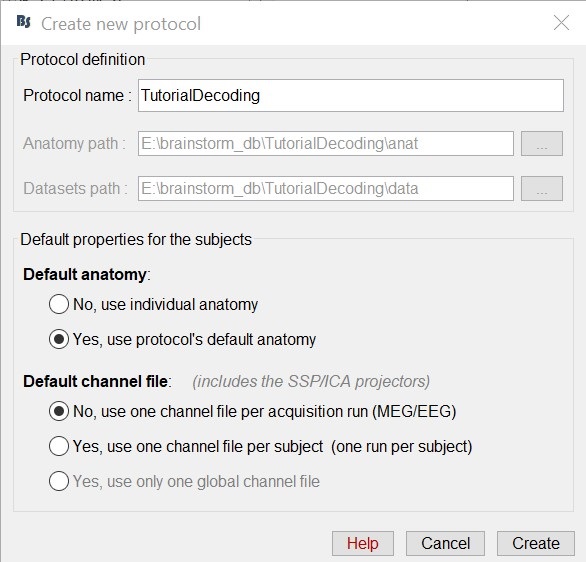

Select the menu File -> Create new protocol. Name it "TutorialDecoding" and select the options:

"Yes, use protocol's default anatomy",

"No, use one channel file per acquisition run".

Import the recordings

- Go to the "functional data" view of the database (sorted by subjects).

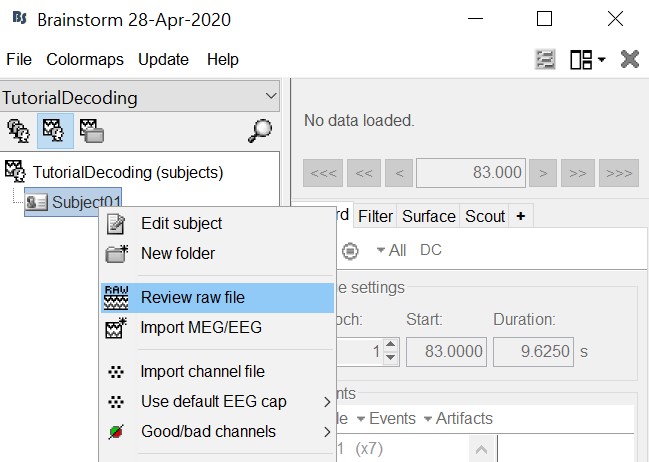

Right-click on the TutorialDecoding folder -> New subject -> Subject01

Leave the default options you defined for the protocol.

Right-click on the subject node (Subject01) -> Review raw file.

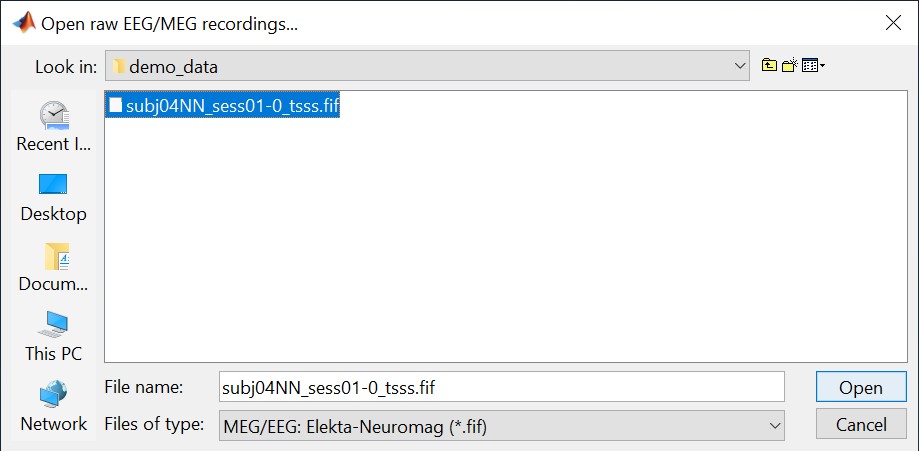

Select the file format: "MEG/EEG: Neuromag FIFF (*.fif)"

Select the file: subj04NN-sess01-0_tsss.fif

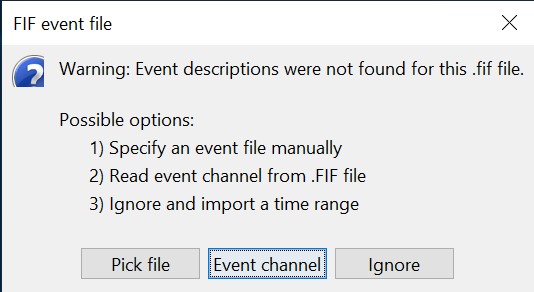

Select "Event channels" to read the triggers from the stimulus channel.

- We will not pay attention to MEG/MRI registration because we are not going to compute any source models. The decoding is done on the sensor data.

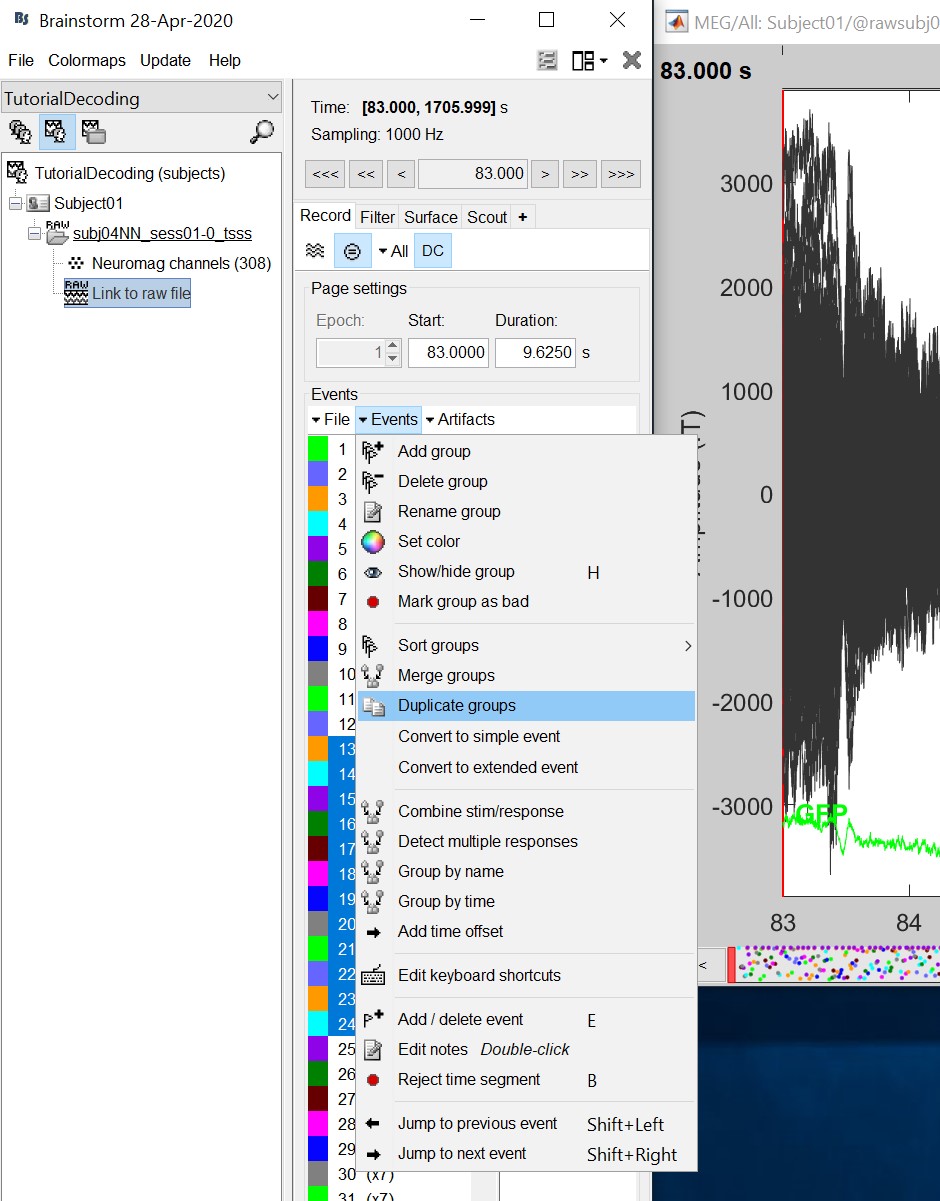

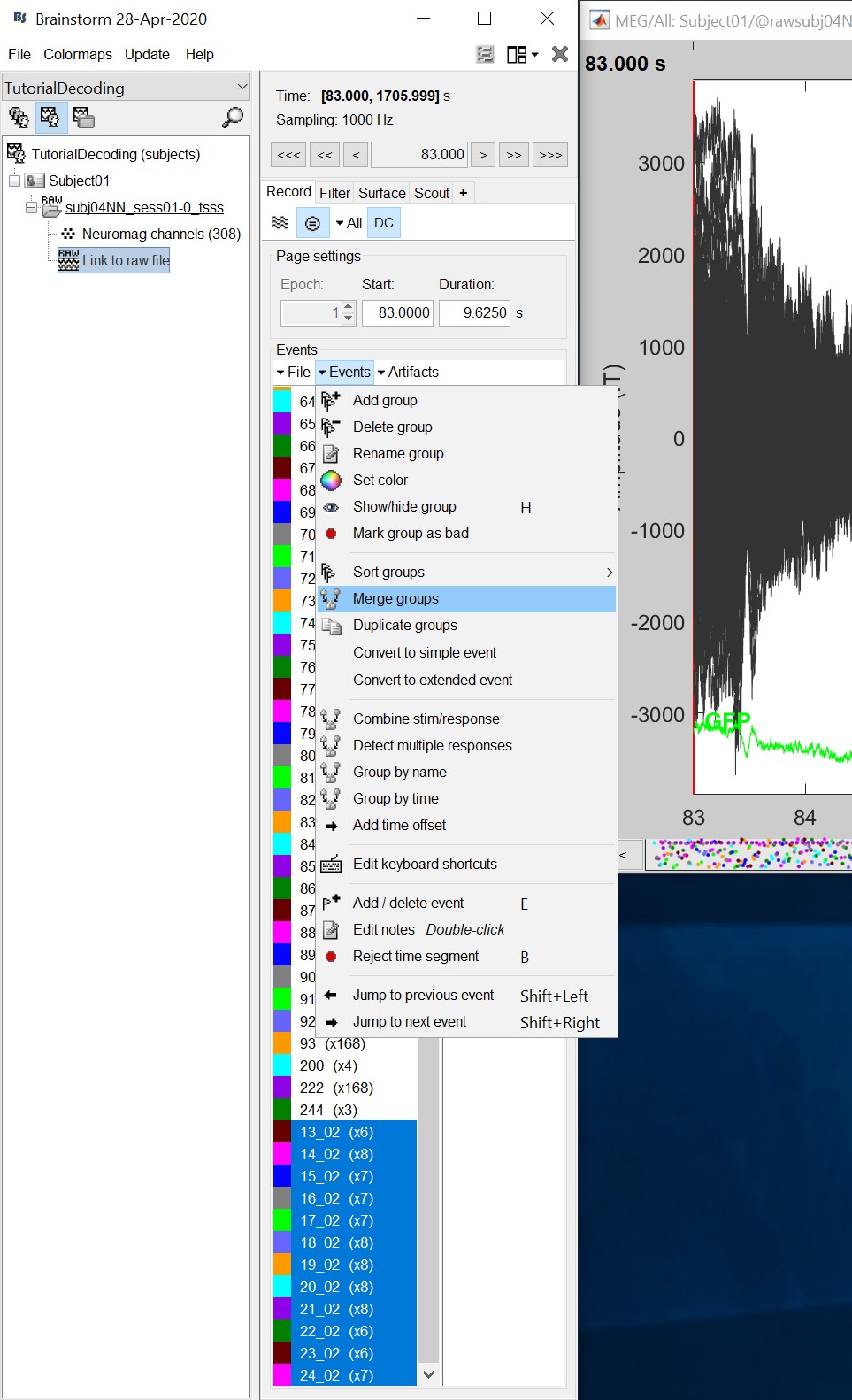

Double-click on the 'Link to raw file' to visualize the raw recordings. Event codes 13-24 indicate responses to face images, and we will combine them into a single group called 'faces'. To do so, select events 13-24 using SHIFT + click and from the menu select "Events -> Duplicate groups". Then select "Events -> Merge groups". The event codes are duplicated first so that we do not lose the original 13-24 event codes.

Event codes 49-60 indicate responses to object images, and we will combine them into a single group called 'objects'. To do so, select events 49-60 (SHIFT + click) and from the menu select "Events -> Duplicate groups". Then select "Events -> Merge groups". No screenshots are shown since this is similar to the steps above.

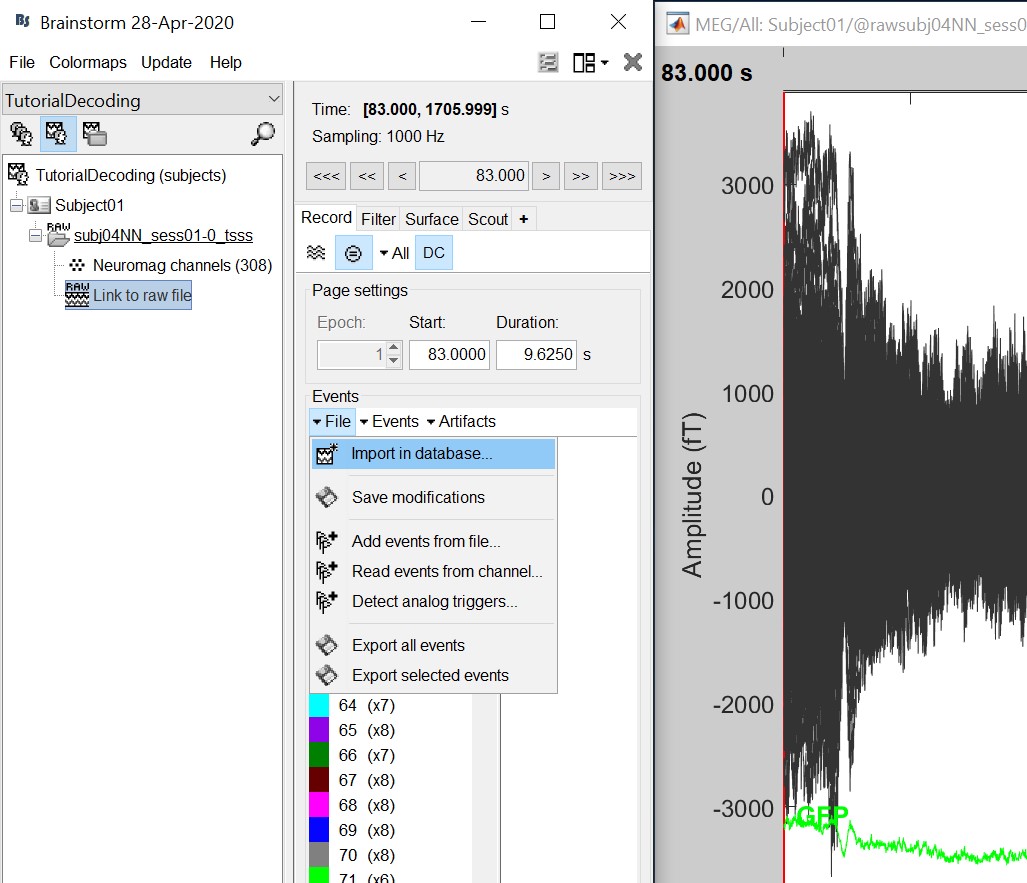

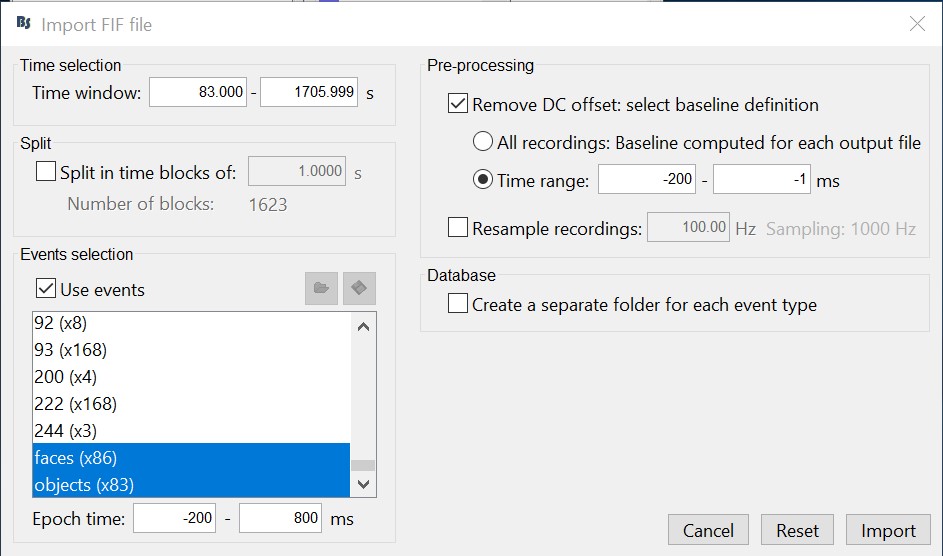

We will now import the 'faces' and 'objects' responses to the database. Select "File -> Import in database".

- Select only two events: 'faces' and 'objects'

- Epoch time: [-200, 800] ms

- Remove DC offset: Time range: [-200, 0] ms

- Do not create separate folders for each event type
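The 'Remove DC offset' option can be pictured as subtracting, from each channel of an epoch, that channel's mean over the baseline window. The Python sketch below illustrates the idea under that assumption. The function name, the inclusive handling of the window edges, and the toy values are hypothetical and do not describe Brainstorm's internal code.

```python
def remove_dc_offset(epoch, times_ms, baseline=(-200.0, 0.0)):
    """Subtract each channel's mean over the baseline window.

    epoch: list of channels, each a list of samples.
    times_ms: time of each sample in milliseconds.
    """
    # Indices of the samples that fall inside the baseline window
    idx = [i for i, t in enumerate(times_ms) if baseline[0] <= t <= baseline[1]]
    corrected = []
    for channel in epoch:
        offset = sum(channel[i] for i in idx) / len(idx)
        corrected.append([value - offset for value in channel])
    return corrected

# Toy epoch (assumption): 2 channels, 5 samples from -200 ms to 200 ms
times_ms = [-200.0, -100.0, 0.0, 100.0, 200.0]
epoch = [[5.0, 5.0, 5.0, 8.0, 6.0],
         [-1.0, 0.0, 1.0, 3.0, 2.0]]
clean = remove_dc_offset(epoch, times_ms)
```

After this step the pre-stimulus part of every channel averages to zero, so constant sensor offsets do not contribute to the patterns seen by the classifier.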

Select files

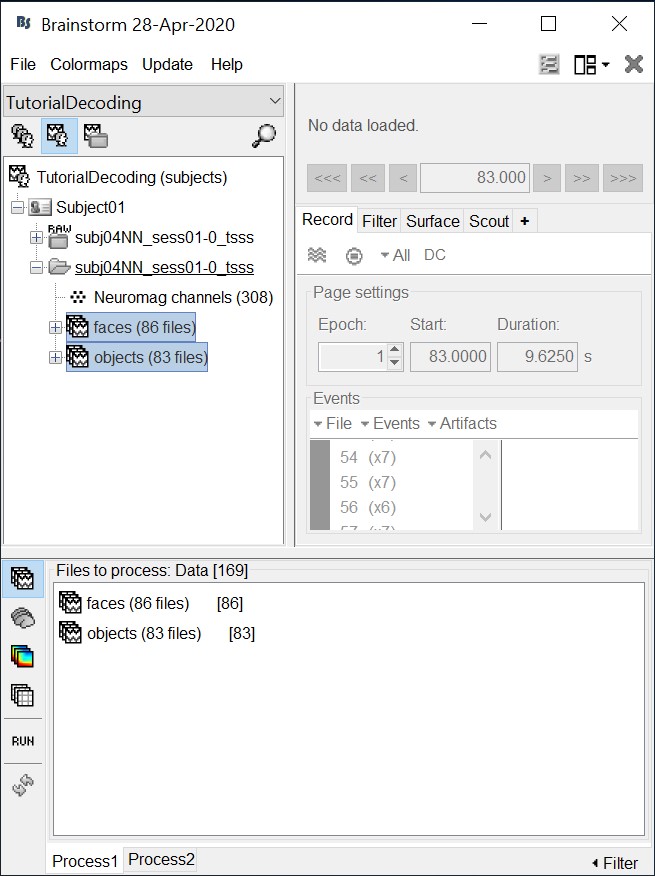

- Drag and drop all the face and object trials to the Process1 tab at the bottom of the Brainstorm window.

Intuitively, you might have expected to use the Process2 tab to decode faces vs. objects. However, for computational efficiency the decoding process is designed to also handle pairwise decoding of multiple classes (not just two), so more than two categories can be entered in the Process1 tab.

Decoding with cross-validation

Cross-validation is a model validation technique for assessing how the results of our decoding analysis will generalize to an independent data set.

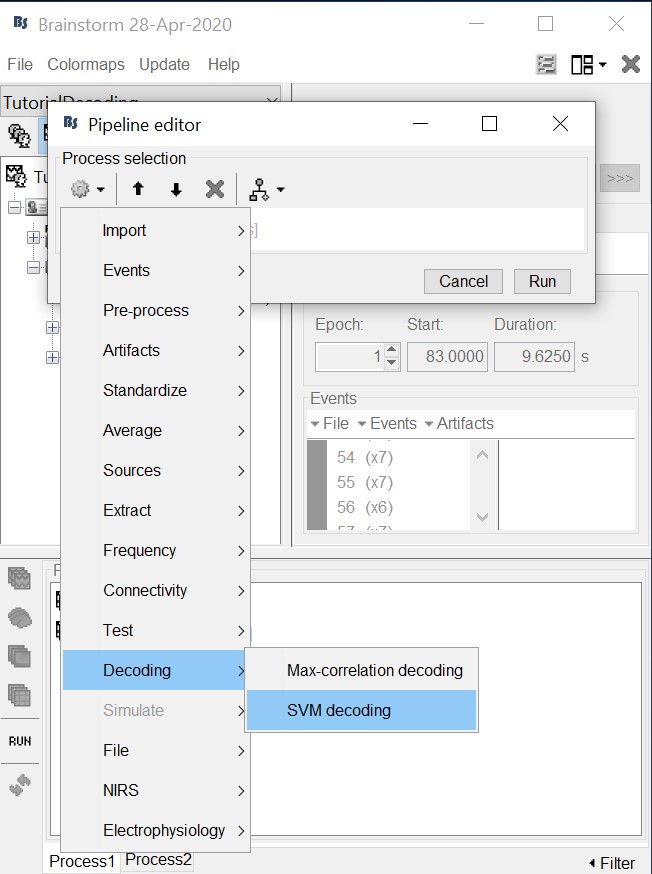

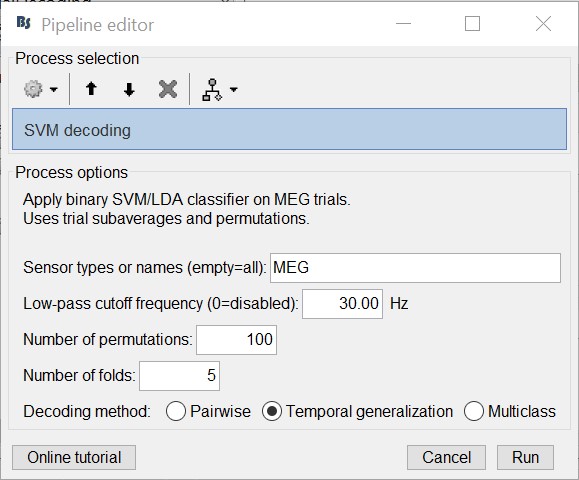

Select process "Decoding > SVM decoding"

Note: the SVM process requires the LibSVM toolbox, see installation instructions above.

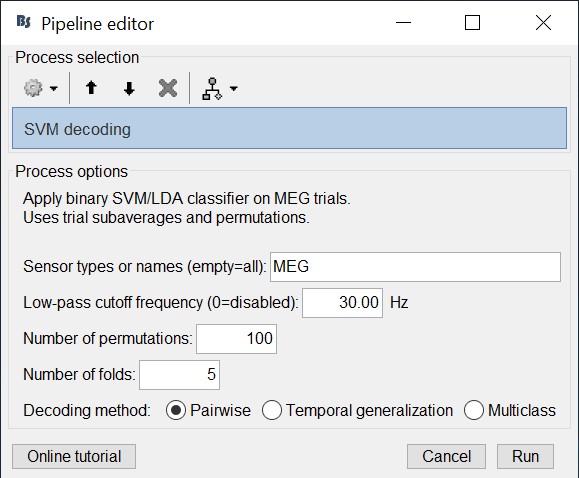

- Select 'MEG' for sensor types

- Set 30 Hz for low-pass cutoff frequency. Equivalently, one could apply a low-pass filter to the recordings and then run the decoding process. This option is a shortcut that applies the low-pass filter just for decoding, without permanently altering the input recordings.

- Select 100 for number of permutations. Alternatively use a smaller number for faster results.

- Select 5 for number of k-folds

- Select 'Pairwise' for decoding. Hint: if more than two classes are input to the Process1 tab, the decoding process performs decoding separately for each possible pair of classes. It returns the results in the same form as Matlab's 'squareform' function (i.e. lower triangular elements in columnwise order).

- The decoding process follows a procedure similar to that of Pantazis et al. (2018). Namely, to reduce computational load and improve signal-to-noise ratio, we first randomly assign all trials (from each class) to k folds, and then subaverage all trials within each fold into a single trial, thus yielding a total of k subaveraged trials per class. Decoding then follows with a leave-one-out cross-validation procedure on the subaveraged trials.

For example, if we have two classes with 100 trials each, selecting 5 folds will randomly assign the 100 trials of each class to 5 folds of 20 trials each. The process will then subaverage the 20 trials in each fold, yielding 5 subaveraged trials for each class.
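The subaveraging and leave-one-out steps can be sketched in Python as follows. This is an illustration only: the data are simulated, the function names are hypothetical, and a nearest-class-mean rule stands in for the SVM so that the example runs with the standard library alone.

```python
import random

def subaverage_folds(trials, k, rng):
    """Randomly split trials into k folds and average the trials of each fold."""
    order = list(range(len(trials)))
    rng.shuffle(order)
    folds = [order[i::k] for i in range(k)]  # fold sizes differ by at most one
    return [[sum(vals) / len(vals) for vals in zip(*[trials[i] for i in fold])]
            for fold in folds]

def nearest_mean_label(sample, means):
    """Stand-in classifier (NOT an SVM): pick the closest class mean."""
    def sq_dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))
    return min(means, key=lambda label: sq_dist(sample, means[label]))

def leave_one_out_accuracy(sub_by_class):
    """Hold out one subaveraged trial per class, train on the rest, test."""
    k = len(next(iter(sub_by_class.values())))
    correct = total = 0
    for held_out in range(k):
        means = {}
        for label, subs in sub_by_class.items():
            train = [s for i, s in enumerate(subs) if i != held_out]
            means[label] = [sum(v) / len(v) for v in zip(*train)]
        for label, subs in sub_by_class.items():
            correct += int(nearest_mean_label(subs[held_out], means) == label)
            total += 1
    return correct / total

rng = random.Random(0)
# Simulated data (assumption): 100 trials per class, 3 features at one time point
faces = [[rng.gauss(1.0, 1.0) for _ in range(3)] for _ in range(100)]
objects = [[rng.gauss(-1.0, 1.0) for _ in range(3)] for _ in range(100)]
subaveraged = {"faces": subaverage_folds(faces, 5, rng),
               "objects": subaverage_folds(objects, 5, rng)}
accuracy = leave_one_out_accuracy(subaveraged)
```

Averaging 20 trials reduces the noise standard deviation by a factor of about sqrt(20), which is why classification on subaveraged trials is both faster and more reliable than on single trials.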

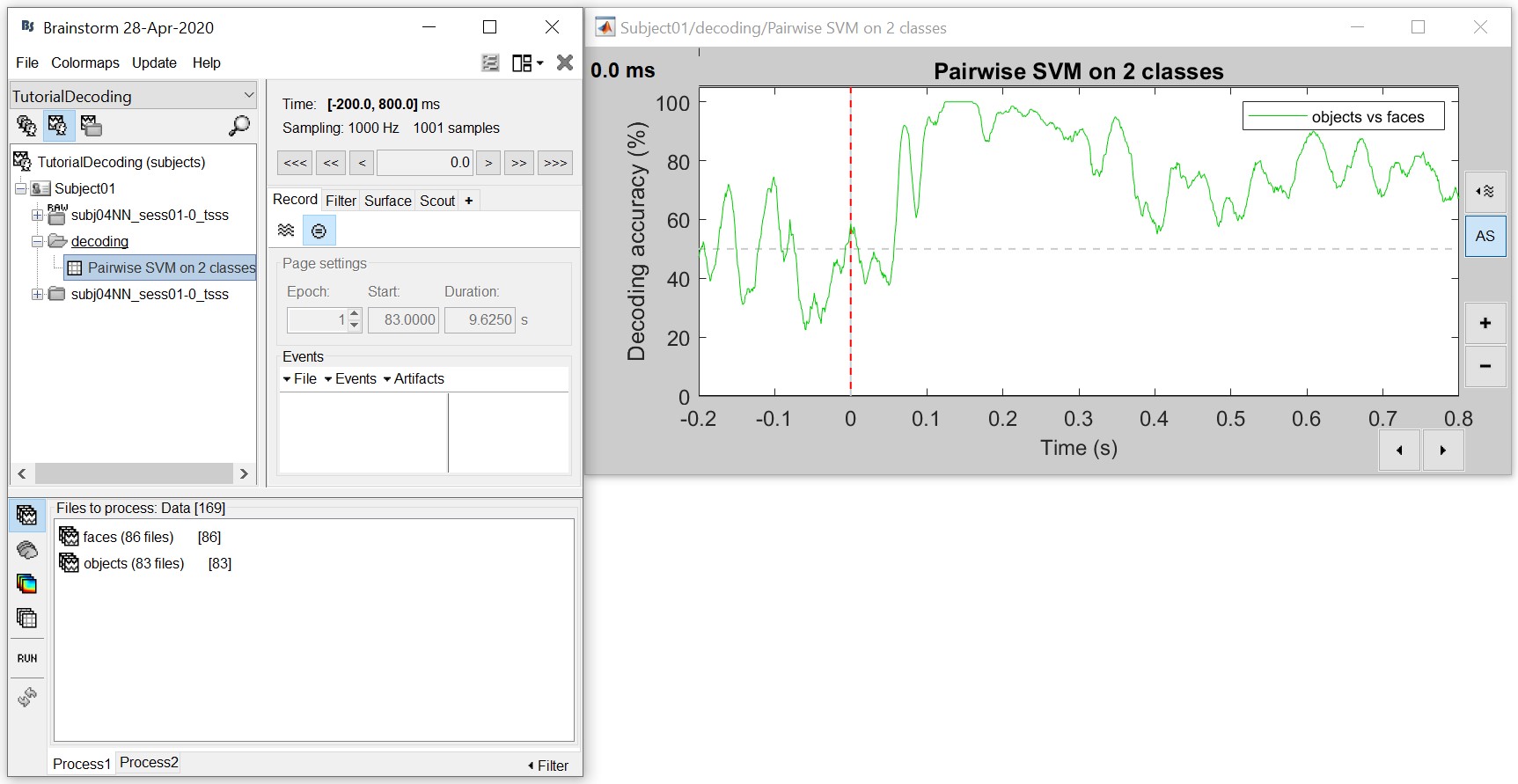

The process will take some time. The results are then saved in a file in the 'decoding' folder.
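When more than two classes are decoded with the 'Pairwise' option, the results follow the 'squareform' ordering mentioned above. The Python sketch below shows how such a condensed vector maps back to class pairs and to a symmetric matrix. It uses 0-based indices (Matlab uses 1-based), and the helper names and accuracy values are hypothetical.

```python
def pair_order(n_classes):
    """Class pairs in condensed order: lower-triangular elements of the
    n x n matrix read column by column, written here as (i, j) with i < j."""
    return [(i, j) for i in range(n_classes) for j in range(i + 1, n_classes)]

def to_square(values, n_classes):
    """Expand a condensed vector of pairwise values into a symmetric matrix."""
    matrix = [[0.0] * n_classes for _ in range(n_classes)]
    for value, (i, j) in zip(values, pair_order(n_classes)):
        matrix[i][j] = matrix[j][i] = value
    return matrix

# Hypothetical accuracies for 3 classes at one time point:
# pairs (0,1), (0,2), (1,2) in that order
square = to_square([0.9, 0.8, 0.7], 3)
pairs_for_four = pair_order(4)
```

For n classes the condensed vector has n(n-1)/2 entries, so 4 classes yield 6 pairwise decoding results.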

The resulting matrix gives you the decoding accuracy over time. Notice that accuracy is not significantly above chance before about 80 ms, after which it becomes very high (> 90%) between 100 and 300 ms. This tells us that the neural representations of the face and object images differ markedly during this period; to analyze this further, we could narrow our analysis to this time window.

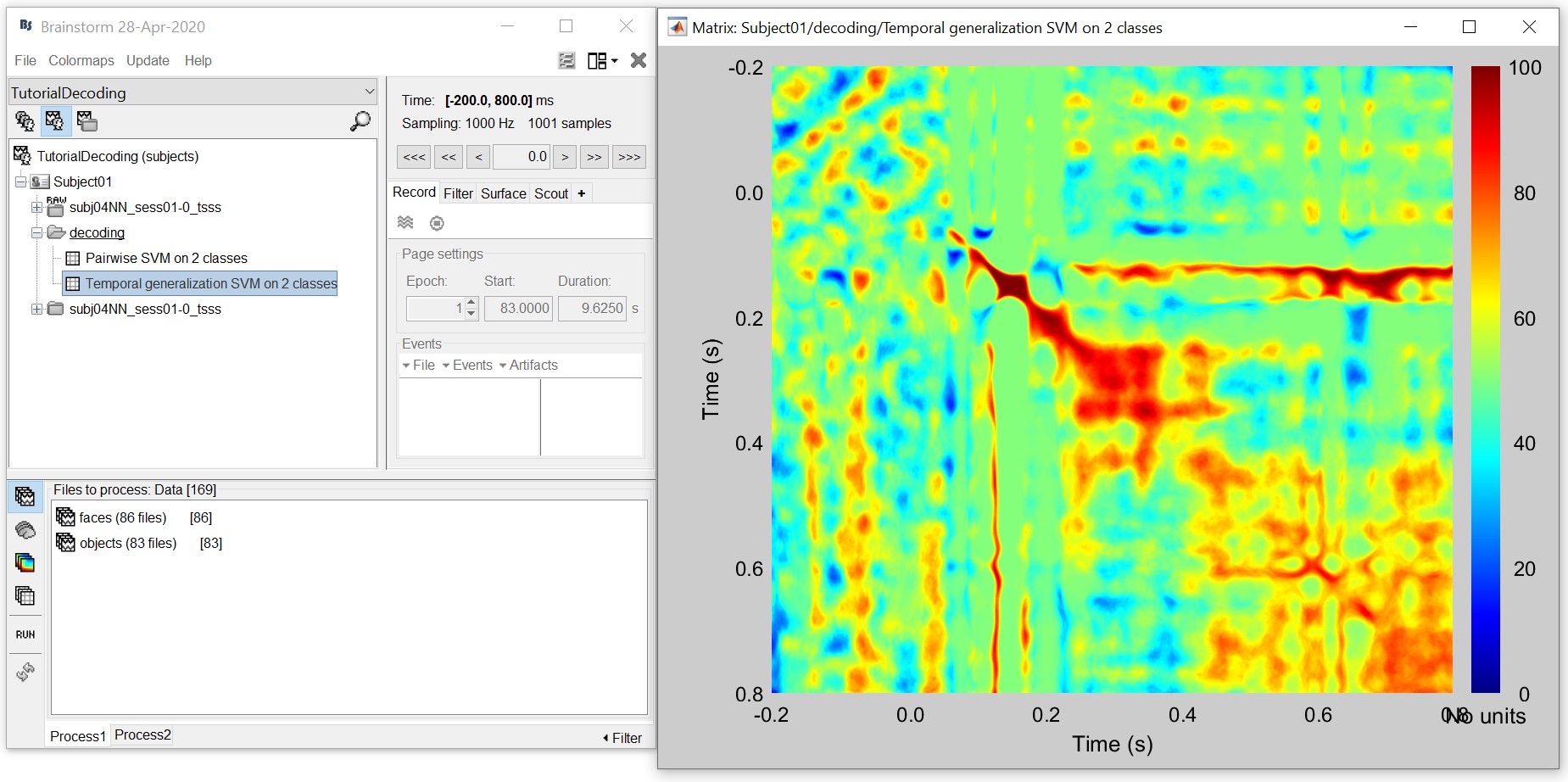

- For temporal generalization, repeat the above process but select 'Temporal Generalization'.

To evaluate the persistence of neural representations over time, the decoding procedure can be generalized across time by training the SVM classifier at a given time point t, as before, but testing across all other time points (Cichy et al., 2014; King and Dehaene, 2014; Isik et al., 2014). Intuitively, if representations are stable over time, the classifier should successfully discriminate signals not only at the trained time t, but also over extended periods of time.
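The train-at-one-time, test-at-all-times logic can be sketched in Python as follows. This is a toy illustration under stated assumptions: a nearest-class-mean rule replaces the SVM, the data are hand-made rather than recorded, and all names are hypothetical.

```python
def class_mean(trials, t):
    """Mean channel pattern across trials at time index t.
    trials[trial][t] is a list of channel values."""
    return [sum(v) / len(v) for v in zip(*[trial[t] for trial in trials])]

def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def temporal_generalization(train_a, train_b, test_a, test_b):
    """Accuracy matrix indexed as matrix[train time][test time]."""
    n_times = len(train_a[0])
    matrix = [[0.0] * n_times for _ in range(n_times)]
    for t_train in range(n_times):
        mean_a = class_mean(train_a, t_train)
        mean_b = class_mean(train_b, t_train)
        for t_test in range(n_times):
            correct = 0
            for trial in test_a:
                p = trial[t_test]
                correct += int(sq_dist(p, mean_a) < sq_dist(p, mean_b))
            for trial in test_b:
                p = trial[t_test]
                correct += int(sq_dist(p, mean_b) < sq_dist(p, mean_a))
            matrix[t_train][t_test] = correct / (len(test_a) + len(test_b))
    return matrix

def make_trials(sign):
    """Two toy trials, 3 time points x 2 channels. The class is carried by
    channel 2 at time 0 (transient) and by channel 1 at times 1-2 (sustained)."""
    return [[[j, sign * 1.0], [sign * 1.0, j], [sign * 1.0, j]]
            for j in (0.1, -0.1)]

matrix = temporal_generalization(make_trials(+1), make_trials(-1),
                                 make_trials(+1), make_trials(-1))
```

In this toy case the classifier trained at time 0 performs at chance (0.5) when tested at times 1 and 2, while classifiers trained at time 1 or 2 generalize perfectly to each other, giving the square block that a sustained representation produces off the diagonal. The toy reuses identical values for training and testing only for brevity; a real analysis would keep them separate, as in the cross-validation procedure described earlier.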

The process will take some time. The results are then saved in a file in the 'decoding' folder.

- This representation gives us an approximately symmetric decoding matrix in which time periods with similar neural representations appear in red. For example, early signals up to 200 ms have a narrow diagonal, indicating highly transient visual representations. On the other hand, the extended diagonal (red blob) between 200 and 400 ms indicates a stable neural representation during this period. We notice a similar, albeit slightly attenuated, phenomenon between 400 and 800 ms. In sum, this visualization can help us identify time periods with transient and sustained neural representations.

References

Cichy RM, Pantazis D, Oliva A (2014), Resolving human object recognition in space and time, Nature Neuroscience, 17:455-462.

Guggenmos M, Sterzer P, Cichy RM (2018), Multivariate pattern analysis for MEG: A comparison of dissimilarity measures, NeuroImage, 173:434-447.

King JR, Dehaene S (2014), Characterizing the dynamics of mental representations: the temporal generalization method, Trends in Cognitive Sciences, 18(4):203-210.

Isik L, Meyers EM, Leibo JZ, Poggio T (2014), The dynamics of invariant object recognition in the human visual system, Journal of Neurophysiology, 111(1):91-102.

Additional documentation

Forum: Decoding in source space: http://neuroimage.usc.edu/forums/showthread.php?2719