
| Line 1: | Line 1: |

| = Decoding conditions = ''Authors: Seyed-Mahdi Khaligh-Razavi, Francois Tadel, Dimitrios Pantazis'' |

= Machine learning: Decoding / MVPA = ''Authors: Dimitrios Pantazis''', '''Seyed-Mahdi Khaligh-Razavi, Francois Tadel, '' |

| Line 4: | Line 4: |

| This tutorial illustrates how to use the functions developed at Aude Oliva's lab (MIT) to run support vector machine (SVM) and linear discriminant analysis (LDA) classification on your MEG data across time. | This tutorial illustrates how to run MEG decoding using support vector machines (SVM). |

| Line 8: | Line 8: |

| <<Include(DatasetDecoding, , from="\<\<HTML\(\<!-- START-PAGE --\>\)\>\>", to="\<\<HTML\(\<!-- STOP-SHORT --\>\)\>\>")>> | == License == To reference this dataset in your publications, please cite Cichy et al. (2014). |

| Line 11: | Line 12: |

| Two methods are offered for the classification of MEG recordings across time: | Two decoding processes are available in Brainstorm: |

| Line 13: | Line 14: |

| * Support vector machine (SVM): * Linear discriminant analysis (LDA): |

* Decoding > Classification with SVD * Decoding > Classification with max-correlation classifier |

| Line 16: | Line 17: |

| These two processes in very similar ways: | These two processes work in a similar way, but they use a different classifier, so only SVD is demonstrated here. |

| Line 18: | Line 19: |

| * '''Input''': the input is the channel data from two conditions (e.g. condA and condB) across time. Number of samples per condition should be the same for both condA and condB. Each of them should at least contain two samples. * '''Output''': the output is a decoding curve across time, showing your decoding accuracy (decoding condA vs. condB) at timepoint 't'. |

* '''Input''': the input is the channel data from two conditions (e.g. condA and condB) across time. Number of samples per condition do not have to be the same for both condA and condB, but each of them should have enough samples to create k-folds (see parameter below). * '''Output''': the output is a decoding time course, or a temporal generalization matrix (train time x test time). * '''Classifier''': Two methods are offered for the classification of MEG recordings across time: support vector machine (SVM) and max-correlation classifier. |

| Line 21: | Line 23: |

| In the context of this tutorial, we have two condition types: faces, and scenes. We want to decode faces vs. scenes using 306 MEG channels. In the data, the faces are named as condition ‘201’; and the scenes are named as condition ‘203’. | In the context of this tutorial, we have two condition types: faces, and objects. We want to decode faces vs. objects using 306 MEG channels. |

| Line 24: | Line 26: |

| * Go to the [[http://neuroimage.usc.edu/brainstorm3_register/download.php|Download]] page of this website, and download the file: '''sample_decoding.zip ''' * Unzip it in a folder that is not in any of the Brainstorm folders (program folder or database folder). This is really important that you always keep your original data files in a separate folder: the program folder can be deleted when updating the software, and the contents of the database folder is supposed to be manipulated only by the program itself. |

* From the [[http://neuroimage.usc.edu/bst/download.php|Download]] page of this website, download the file: '''subj04NN_sess01-0_tsss.fif''' |

| Line 30: | Line 31: |

| * "'''No, use one channel file per condition'''". <<BR>><<BR>> {{attachment:decoding_protocol.gif||height="370",width="344"}} | * "'''No, use one channel file per condition'''". <<BR>><<BR>> {{attachment:1_create_new_protocol.jpg||width="400"}} |

| Line 35: | Line 36: |

| * Right click on the subject node (Subject01) > '''Review raw file'''''.'' <<BR>>Select the file format: "'''MEG/EEG: Neuromag FIFF (*.fif)'''"<<BR>>Select the file: sample_decoding/'''mem6-0_tsss_mc.fif''' <<BR>><<BR>>{{attachment:decoding_link.gif}} * Select "Event channels" to read the triggers from the stimulus channel. <<BR>><<BR>> {{attachment:3_fif.png||height="146",width="339"}} * Right-click on the "Link to raw file" > '''Import in database'''. |

* Right click on the subject node (Subject01) > '''Review raw file'''''.'' <<BR>>Select the file format: "'''MEG/EEG: Neuromag FIFF (*.fif)'''"<<BR>>Select the file: sample_decoding/'''mem6-0_tsss_mc.fif''' <<BR>> {{attachment:2_review_raw_file.jpg||width="440"}} <<BR>><<BR>> {{attachment:2_review_raw_file2.jpg||width="440"}} <<BR>><<BR>> * Select "Event channels" to read the triggers from the stimulus channel. <<BR>><<BR>> {{attachment:3_event_channel.jpg||width="320"}} * We will not pay attention to MEG/MRI registration because we are not going to compute any source models. The decoding is done on the sensor data. * Double click on the 'Link to raw file' to visualize the raw recordings. Event codes 13-24 indicate responses to face images, and we will combine them to a single group called 'faces'. To do so, select events 13-24 and from the menu select Events->Duplicate groups. Then select Events->Merge groups. * The event codes are duplicated first so we do not lose the original 13-24 event codes. {{attachment:4_duplicate_groups_faces.jpg||width="500"}} <<BR>><<BR>> {{attachment:5_merge_groups_faces.jpg||width="500"}} * Event codes 49-60 indicate responses to object images, and we will combine them to a single group called 'objects'. To do so, select events 49-60 and from the menu select Events->Duplicate groups. Then select Events->Merge groups. No screenshots are shown since this is similar to above. * We will now import the 'faces' and 'objects' responses to the database. Select File->'''Import in database'''. <<BR>><<BR>> . {{attachment:10_import_in_database.jpg||width="500"}} <<BR>><<BR>> * Select only two events: 'faces' and 'objects' * Epoch time: [-200, 800] ms * Remove DC offset: Time range: [-200, 0] ms * Do not create separate folders for each event type |

| Line 39: | Line 50: |

| <<BR>><<BR>> | {{attachment:11_import_in_database_window.jpg||width="500"}} |

| Line 41: | Line 52: |

| == Import MEG data == 1. Set epoch time to [-100, 1000] 1. Time range: [-100,0] 1. From the events select only these two events: 201 (faces), and 203(scenes) <<BR>> <<BR>> {{attachment:4_importfif.png}} <<BR>><<BR>> 1. Then press import. You will get a message saying ‘some epochs are shorter than the others..’ . Press yes. |

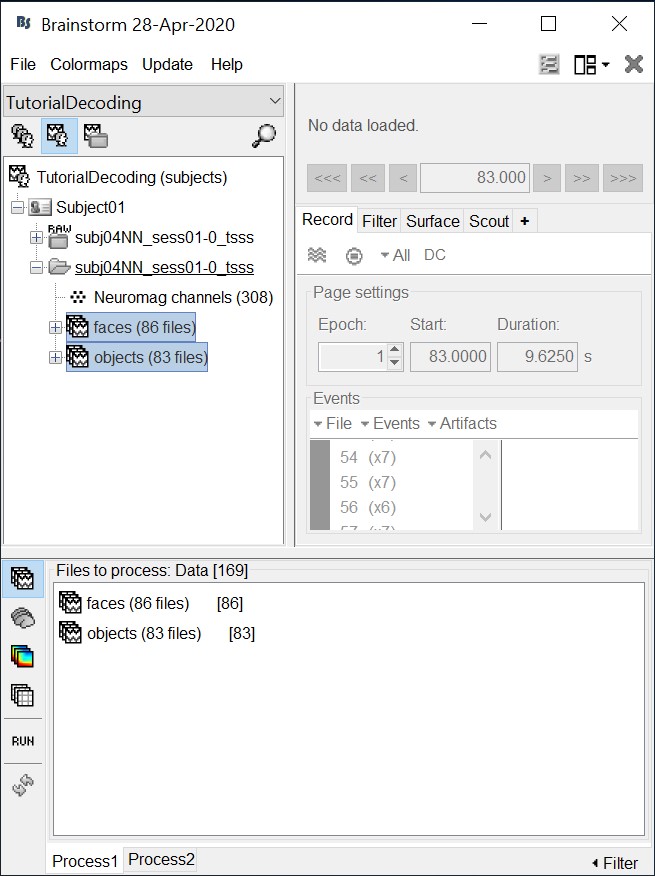

== Select files == * Drag and drop all the face and object trials to the Process1 tab at the bottom of the Brainstorm window. |

| Line 47: | Line 55: |

| == Decoding conditions == 1. Select ‘Process2’ from the bottom brainstorm window. 1. Drag and drop 40 files from folder ‘201’ to ‘Files A’; and 40 files from folder ‘203’ to ‘Files B’. You can select more than 40 or less. The important thing is that both ‘A’ and ‘B’ should have the same number of files. <<BR>><<BR>> {{attachment:5_dragdrop.png}} <<BR>><<BR>> |

{{attachment:12_select_files.jpg||width="500"}} |

| Line 51: | Line 57: |

| === a) Cross-validation === | == Cross-validation == |

| Line 54: | Line 60: |

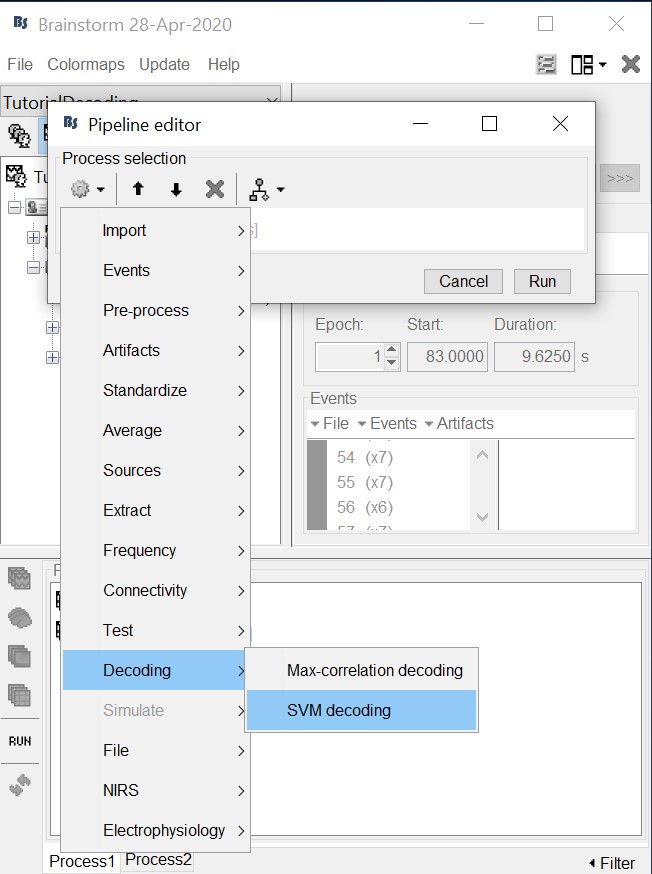

| 1. Select run -> decoding conditions -> classification with cross validation <<BR>><<BR>> {{attachment:6_decodingcrossval.png}} <<BR>><<BR>> Here, you will have three choices for cross-validation. If you have Matlab Statistics and Machine Learning Toolbox, you can use ‘Matlab SVM’ or ‘Matlab LDA’. You can also install the ‘LibSVM’ toolbox (https://www.csie.ntu.edu.tw/~cjlin/libsvm/ ); and addpath it to your Matlab session. LibSVM may be faster. The LibSVM cross-validation won’t be stratified. However, if you select Matlab SVM/LDA, it will do a k-fold stratified cross-validation for you, meaning that each fold will contain the same proportions of the two types of class labels. | * Select process "'''Decoding > Classification with cross-validation'''": <<BR>><<BR>> {{attachment:13_pipeline_editor_select_decoding.jpg||width="500"}} * * * * '''Low-pass cutoff frequency''': If set, it will apply a low-pass filter to all the input recordings. * '''Matlab SVM/LDA''': Require Matlab's Statistics and Machine Learning Toolbox. <<BR>>These methods do a k-fold stratified cross-validation for you, meaning that each fold will contain the same proportions of the two types of class labels (option "Number of folds"). * '''LibSVM''': Requires the LibSVM toolbox ([[http://www.csie.ntu.edu.tw/~cjlin/libsvm/#download|download]] and add to your path).<<BR>>The LibSVM cross-validation may be faster but will not be stratified. |

| Line 56: | Line 67: |

| You can also set the number of folds for cross-validation; and the cut-off frequency for low-pass filtering – this is to smooth your data. | * The process will take some time. The results are then saved in a file in the new decoding folder. <<BR>><<BR>> {{attachment:cv_file.gif||height="167",width="268"}} * If you double click on it you will see a decoding curve across time. <<BR>><<BR>> {{attachment:cv_plot.gif||height="172",width="355"}} |

| Line 58: | Line 70: |

| . 2- To continue, set the values as shown below: <<BR>><<BR>> {{attachment:7_crossvaloptions.png}} <<BR>><<BR>> 3- The process will take some time.The results are then saved in a file (‘Matlab SVM Decoding_201_203’) under the new ‘decoding’ node. If you double click on it you will see a decoding curve across time (shown below). <<BR>><<BR>> {{attachment:8_crossvalres1.png}} <<BR>><<BR>> {{attachment:9_crossvalres2.png}} <<BR>><<BR>> The internal brainstorm plot is not perfect for this purpose. To make a proper plot you can plot the results yourself. Right click on the result file (Matlab SVM Decoding_201_203), select 'export to Matlab' from the menu. Give it a name ‘decodingcurve’. Then using Matlab plot function you can plot the decoding accuracies across time. ''<<BR>>'' ''Matlab code: <<BR>>'' * ''figure; '' * ''plot(decodingcurve.Value);'' <<BR>><<BR>> {{attachment:10_crossvalres3.png}} <<BR>><<BR>> === b) Permutation === |

== Permutation == |

| Line 74: | Line 73: |

| 1. Select run -> decoding conditions -> classification with permutation 1. Set the values as the following: |

* Select process "'''Decoding > Classification with cross-validation'''". Set options as below: <<BR>><<BR>> {{attachment:perm_process.gif||height="366",width="311"}} * '''Trial bin size''': If greater than 1, the training data will be randomly grouped into bins of the size you determine here. The samples within each bin are then averaged (we refer to this as sub-averaging); the classifier is then trained using the averaged samples. For example, if you have 40 faces and 40 scenes, and you set the trial bin size to 5; then for each condition you will have 8 bins each containing 5 samples. Seven bins from each condition will be used for training, and the two left-out bins (one face bin, one scene bin) will be used for testing the classifier performance. * The results are saved in a file in the new decoding folder. <<BR>><<BR>> {{attachment:perm_file.gif||height="142",width="284"}} * Right-click > Display as time series (or double click). <<BR>><<BR>> {{attachment:perm_plot.gif||height="169",width="346"}} |

| Line 77: | Line 78: |

| <<BR>><<BR>> {{attachment:11_permoptions.png}} <<BR>><<BR>> If the ‘Trial bin size’ is greater than 1, the training data will be randomly grouped into bins of the size you determine here. The samples within each bin are then averaged (we refer to this as sub-averaging); the classifier is then trained using the averaged samples. For example, if you have 40 faces and 40 scenes, and you set the trial bin size to 5; then for each condition you will have 8 bins each containing 5 samples. Seven bins from each condition will be used for training, and the two left-out bins (one face bin, one scene bin) will be used for testing the classifier performance. . 3- The results are saved under the ‘decoding ’ node . If you selected ‘Matlab SVM’, the file name will be ‘Matlab SVM Decoding-permutation_201_203’ . Double click on it. You will get the plot blow: <<BR>><<BR>> {{attachment:12_permres1.png}} <<BR>><<BR>> This might not be very intuitive. You can export the decoding results into Matlab and plot it yourself. If you export the decoding results into Matlab, the imported structure will have two important fields: * a) Value: this is the mean decoding accuracy across all permutations * b) Std: this is the standard deviation across all permutations. If you plot the mean value (decodingcurve.Value), below is what you will get. You also have access to the standard deviation (decodingcurve.Std), in case you want to plot it. <<BR>><<BR>> {{attachment:13_permres2.png}} <<BR>><<BR>> |

== Acknowledgment == This work was supported by the ''McGovern Institute Neurotechnology Program'' to PIs: Aude Oliva and Dimitrios Pantazis. ''http://mcgovern.mit.edu/technology/neurotechnology-program'' |

| Line 95: | Line 82: |

| 1. Khaligh-Razavi, S-M; Bainbridge, W; Pantazis, D; and Oliva, A. (2015) Introducing Brain Memorability: an MEG study (in preparation). 1. Cichy, R.M., Pantazis, D., and Oliva, A. (2014). Resolving human object recognition in space and time. Nat Neurosci 17, 455–462. |

1. Khaligh-Razavi SM, Bainbridge W, Pantazis D, Oliva A (2016)<<BR>>[[http://biorxiv.org/content/early/2016/04/22/049700.abstract|From what we perceive to what we remember: Characterizing representational dynamics of visual memorability]]. ''bioRxiv'', 049700. 1. Cichy RM, Pantazis D, Oliva A (2014)<<BR>>[[http://www.nature.com/neuro/journal/v17/n3/full/nn.3635.html|Resolving human object recognition in space and time]], Nature Neuroscience, 17:455–462. == Additional documentation == * Forum: Decoding in source space: http://neuroimage.usc.edu/forums/showthread.php?2719 |

Machine learning: Decoding / MVPA

Authors: Dimitrios Pantazis, Seyed-Mahdi Khaligh-Razavi, Francois Tadel

This tutorial illustrates how to run MEG decoding using support vector machines (SVM).


License

To reference this dataset in your publications, please cite Cichy et al. (2014).

Description of the decoding functions

Two decoding processes are available in Brainstorm:

Decoding > Classification with SVM

Decoding > Classification with max-correlation classifier

These two processes work in a similar way but use different classifiers, so only SVM is demonstrated here.

Input: the channel data from two conditions (e.g. condA and condB) across time. The number of samples does not have to be the same for condA and condB, but each condition should have enough samples to create the k folds (see the parameter below).

Output: the output is a decoding time course, or a temporal generalization matrix (train time x test time).

Classifier: Two methods are offered for the classification of MEG recordings across time: support vector machine (SVM) and max-correlation classifier.

In the context of this tutorial, we have two condition types: faces, and objects. We want to decode faces vs. objects using 306 MEG channels.
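The tutorial does not spell out the decision rule of the max-correlation classifier. As a rough illustration only, the sketch below assumes a common definition: at a given time point, a test pattern (one value per channel) is assigned to the class whose mean training pattern correlates best with it. The function names and the toy data are hypothetical and are not part of Brainstorm.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def mean_pattern(trials):
    """Average channel pattern over a list of trials (each a list of channel values)."""
    n = len(trials)
    return [sum(col) / n for col in zip(*trials)]

def max_correlation_predict(train_a, train_b, test_pattern):
    """Return 'A' or 'B', whichever class mean correlates best with the test pattern."""
    r_a = pearson(mean_pattern(train_a), test_pattern)
    r_b = pearson(mean_pattern(train_b), test_pattern)
    return "A" if r_a >= r_b else "B"

# Toy data (assumed): 3 channels at one time point, two training trials per condition.
cond_a = [[1.0, 2.0, 3.0], [1.2, 1.9, 3.1]]
cond_b = [[3.0, 2.0, 1.0], [2.9, 2.2, 0.8]]
print(max_correlation_predict(cond_a, cond_b, [0.9, 2.1, 2.8]))
```

Repeating this prediction at every time point, and scoring it against the true labels of held-out trials, would give a decoding time course of the kind described above.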

Download and installation

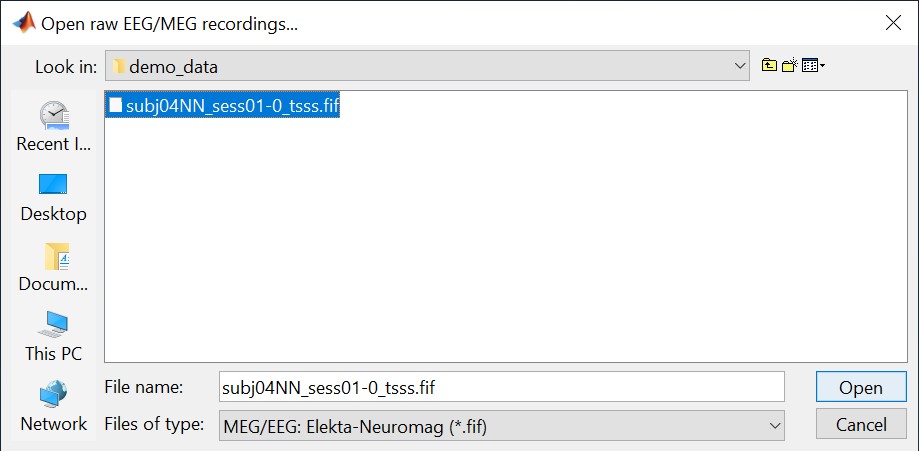

From the Download page of this website, download the file: subj04NN_sess01-0_tsss.fif

- Start Brainstorm (Matlab scripts or stand-alone version).

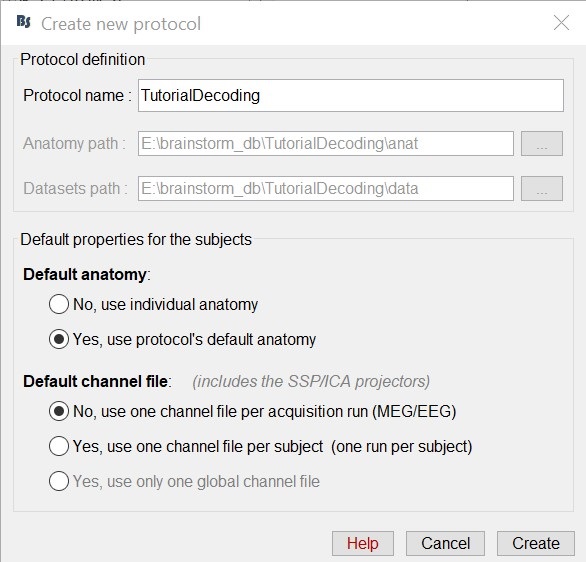

Select the menu File > Create new protocol. Name it "TutorialDecoding" and select the options:

"Yes, use protocol's default anatomy",

"No, use one channel file per condition".

Import the recordings

- Go to the "functional data" view (sorted by subjects).

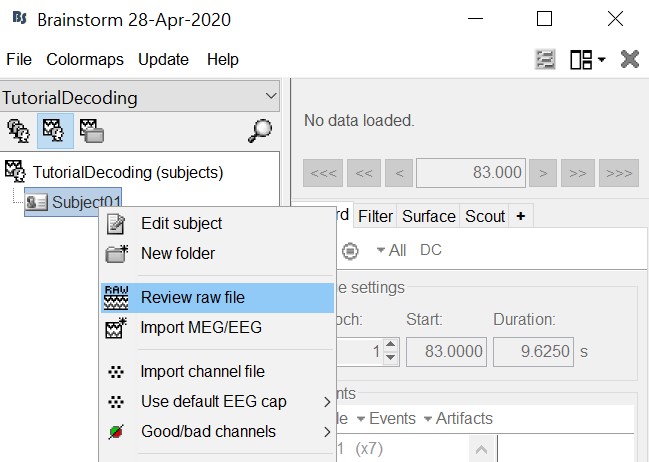

Right-click on the TutorialDecoding folder > New subject > Subject01

Leave the default options you defined for the protocol.

Right-click on the subject node (Subject01) > Review raw file.

Select the file format: "MEG/EEG: Neuromag FIFF (*.fif)"

Select the file: subj04NN_sess01-0_tsss.fif (the file downloaded above)

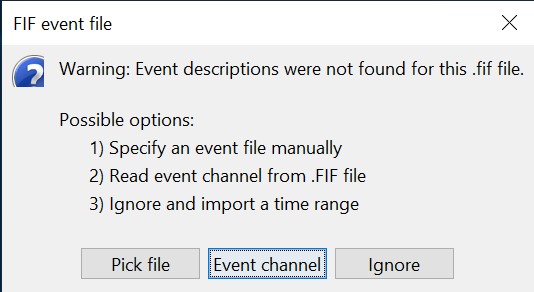

Select "Event channels" to read the triggers from the stimulus channel.

- We will not pay attention to MEG/MRI registration because we are not going to compute any source models. The decoding is done on the sensor data.

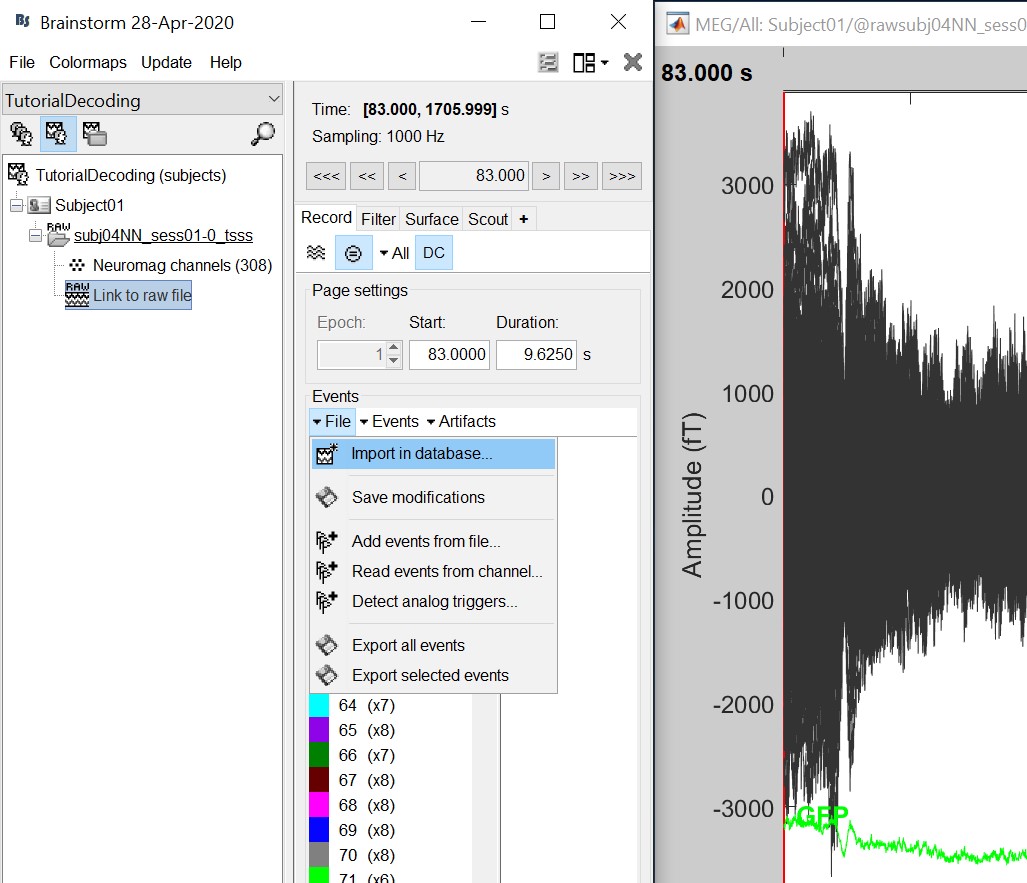

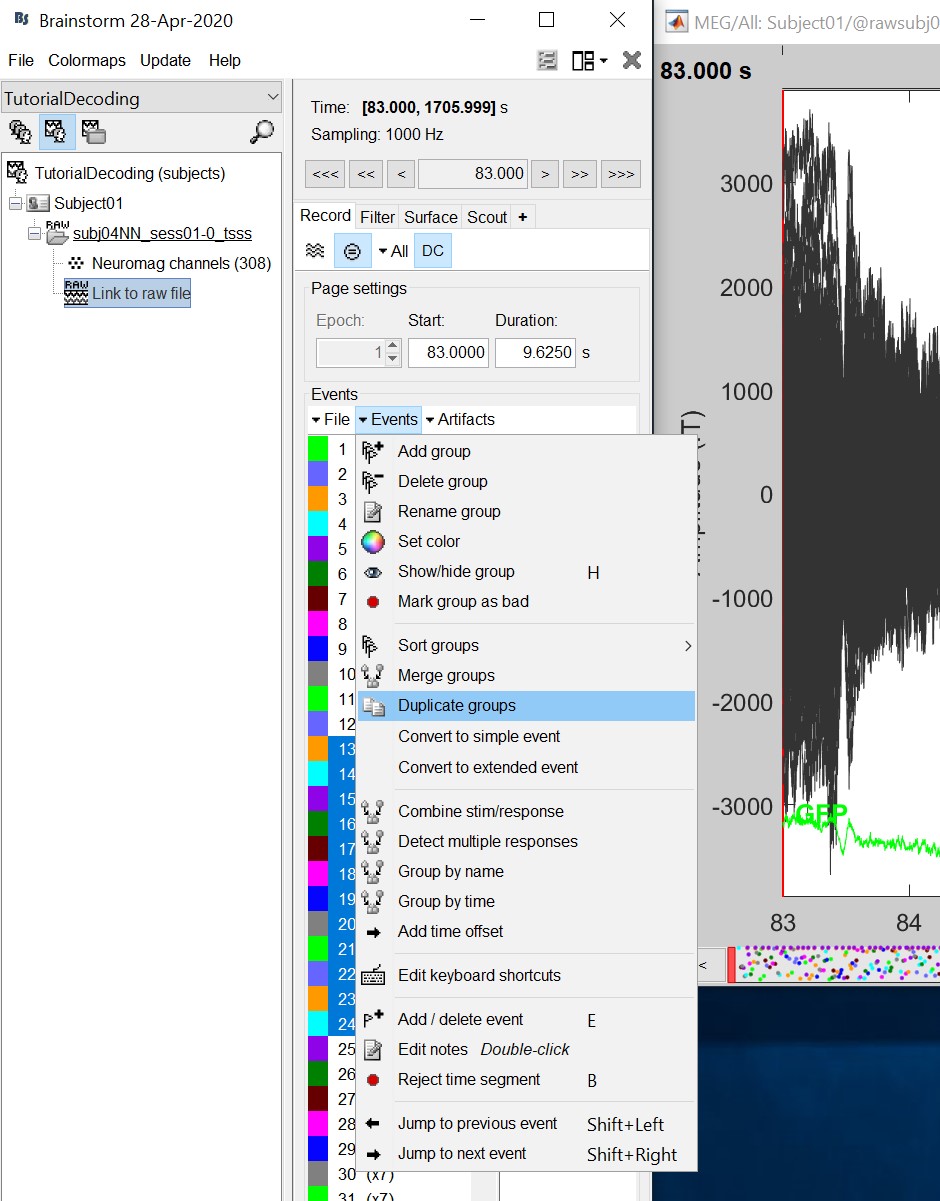

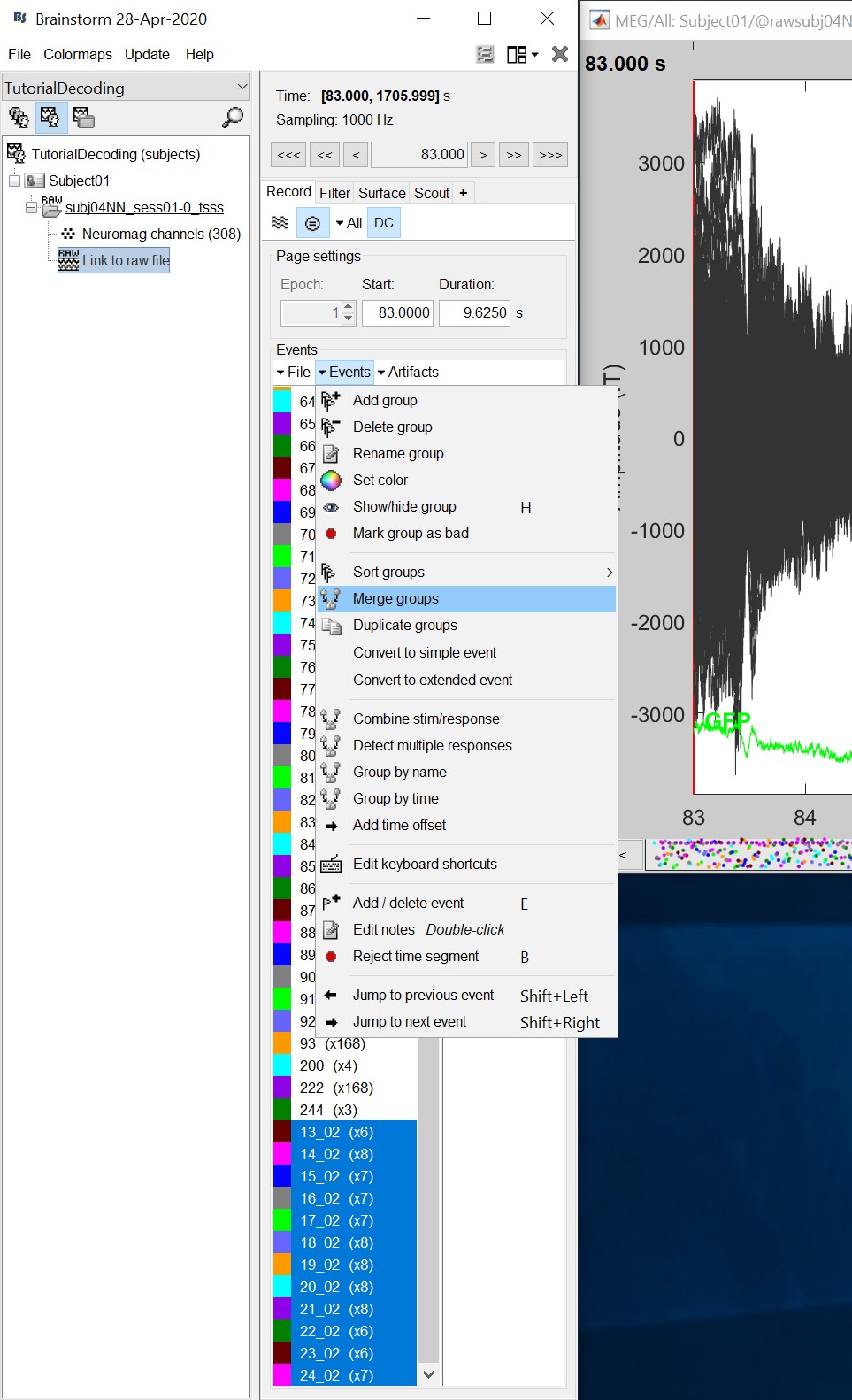

Double-click on 'Link to raw file' to visualize the raw recordings. Event codes 13-24 mark responses to face images, and we will combine them into a single group called 'faces'. To do so, select events 13-24, then select the menu Events > Duplicate groups, followed by Events > Merge groups.

- The event codes are duplicated first so we do not lose the original 13-24 event codes.

Event codes 49-60 mark responses to object images, and we will combine them into a single group called 'objects'. To do so, select events 49-60, then select the menu Events > Duplicate groups, followed by Events > Merge groups. No screenshots are shown because the steps are the same as above.
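The effect of duplicating and then merging event groups can be pictured with a small sketch. It is only an illustration of the bookkeeping, not Brainstorm code: the dictionary layout, the function name and the event latencies are all hypothetical.

```python
def duplicate_and_merge(events, codes, new_label):
    """Copy the occurrences of the given event codes into one merged group.

    The original groups are left untouched, mirroring "Duplicate groups"
    followed by "Merge groups".
    """
    merged = sorted(t for code in codes for t in events.get(code, []))
    result = dict(events)        # keep the original groups
    result[new_label] = merged   # add the merged group
    return result

# Hypothetical event latencies in seconds, keyed by trigger code.
events = {"13": [1.0, 9.5], "14": [3.2], "49": [5.1], "50": [7.4]}
face_codes = [str(c) for c in range(13, 25)]    # codes 13-24
object_codes = [str(c) for c in range(49, 61)]  # codes 49-60
events = duplicate_and_merge(events, face_codes, "faces")
events = duplicate_and_merge(events, object_codes, "objects")
print(events["faces"], events["objects"])
```

Because the merged groups are built from copies, the individual trigger codes remain available for later analyses.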

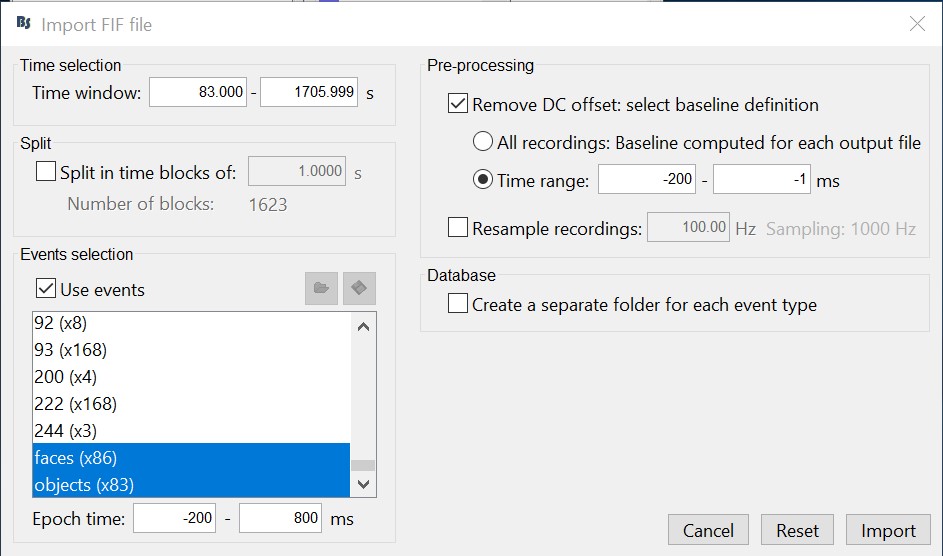

We will now import the 'faces' and 'objects' responses to the database. Select File->Import in database.

- Select only two events: 'faces' and 'objects'

- Epoch time: [-200, 800] ms

- Remove DC offset: Time range: [-200, 0] ms

- Do not create separate folders for each event type
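To make the "Remove DC offset" option concrete, here is a minimal sketch of baseline correction for a single channel of one epoch: the mean over the baseline window [-200, 0] ms is subtracted from every sample. The function name, the coarse 100 ms sampling and the inclusive window bounds are assumptions made for the example; Brainstorm's own implementation may differ in detail.

```python
def remove_dc_offset(signal, times_ms, baseline=(-200.0, 0.0)):
    """Subtract the mean of the baseline window from one channel's epoch."""
    start, stop = baseline
    base = [v for v, t in zip(signal, times_ms) if start <= t <= stop]
    offset = sum(base) / len(base)
    return [v - offset for v in signal]

# Toy epoch sampled every 100 ms from -200 ms to 800 ms (11 samples).
times_ms = [-200.0 + 100.0 * i for i in range(11)]
signal = [5.0, 5.0, 5.0, 7.0, 9.0, 8.0, 6.0, 5.0, 5.0, 5.0, 5.0]
corrected = remove_dc_offset(signal, times_ms)
print(corrected[:4])
```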

Select files

- Drag and drop all the face and object trials to the Process1 tab at the bottom of the Brainstorm window.

Cross-validation

Cross-validation is a model validation technique for assessing how the results of our decoding analysis will generalize to an independent data set.

Select process "Decoding > Classification with cross-validation":

- Low-pass cutoff frequency: If set, a low-pass filter is applied to all the input recordings.

- Matlab SVM/LDA: Requires Matlab's Statistics and Machine Learning Toolbox. These methods do a stratified k-fold cross-validation for you, meaning that each fold contains the same proportions of the two class labels (option "Number of folds").

- LibSVM: Requires the LibSVM toolbox (download it and add it to your Matlab path). The LibSVM cross-validation may be faster but is not stratified.
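What "stratified" means can be shown with a short sketch. It deals the shuffled trials of each class round-robin into k folds, so every fold ends up with roughly the same class proportions. This is an illustration of the idea under assumed trial counts (40 per condition), not the Matlab or LibSVM implementation; the function name is hypothetical.

```python
import random

def stratified_kfold(labels, k, seed=0):
    """Split trial indices into k folds, keeping class proportions similar in each fold."""
    rng = random.Random(seed)
    folds = [[] for _ in range(k)]
    for cls in sorted(set(labels)):
        idx = [i for i, lab in enumerate(labels) if lab == cls]
        rng.shuffle(idx)
        # Deal the shuffled trials of this class round-robin across folds.
        for pos, i in enumerate(idx):
            folds[pos % k].append(i)
    return folds

labels = ["faces"] * 40 + ["objects"] * 40
folds = stratified_kfold(labels, k=5)
for test_idx in folds:
    # Train on all folds except the one held out for testing.
    train_idx = [i for f in folds if f is not test_idx for i in f]
    n_faces = sum(labels[i] == "faces" for i in test_idx)
    print(len(train_idx), len(test_idx), n_faces)
```

With 40 trials per condition and 5 folds, each test fold holds 8 faces and 8 objects, and the remaining 64 trials are used for training.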

The process will take some time. The results are then saved in a file in the new decoding folder.

If you double click on it you will see a decoding curve across time.

Permutation

This is an iterative procedure. In each iteration, the training and test data for the SVM/LDA classifier are selected by randomly permuting the samples and grouping them into bins of size n (you can select the trial bin size). Two samples (one from each condition) are left out for testing, and the rest of the data is used to train the classifier.

Select process "Decoding > Classification with permutation". Set options as below:

Trial bin size: If greater than 1, the training data will be randomly grouped into bins of the size you set here. The samples within each bin are then averaged (we refer to this as sub-averaging), and the classifier is trained on the averaged samples. For example, if you have 40 faces and 40 scenes and you set the trial bin size to 5, each condition will have 8 bins of 5 samples each. Seven bins from each condition are used for training, and the two left-out bins (one face bin, one scene bin) are used for testing the classifier performance.
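The sub-averaging step can be sketched as follows for one condition: trials are shuffled, cut into bins of the chosen size, and averaged within each bin. The function name and the toy data are hypothetical, and dropping leftover trials when the count is not a multiple of the bin size is an assumption of this sketch.

```python
import random

def sub_average(trials, bin_size, seed=0):
    """Randomly group trials into bins of bin_size and average within each bin."""
    rng = random.Random(seed)
    order = list(range(len(trials)))
    rng.shuffle(order)
    n_bins = len(order) // bin_size  # leftover trials are dropped (assumption)
    averaged = []
    for b in range(n_bins):
        members = [trials[i] for i in order[b * bin_size:(b + 1) * bin_size]]
        averaged.append([sum(col) / bin_size for col in zip(*members)])
    return averaged

# 40 toy trials, each with 3 channel values at a single time point.
faces = [[float(i), float(i) + 1.0, float(i) + 2.0] for i in range(40)]
face_bins = sub_average(faces, bin_size=5)
# Seven averaged bins for training, one left out for testing.
train_bins, test_bin = face_bins[:-1], face_bins[-1]
print(len(face_bins), len(train_bins), len(test_bin))
```

Doing the same for the second condition yields the seven training bins and one test bin per condition described in the example above. A new random grouping is drawn in each permutation.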

The results are saved in a file in the new decoding folder.

Right-click > Display as time series (or double click).

Acknowledgment

This work was supported by the McGovern Institute Neurotechnology Program to PIs: Aude Oliva and Dimitrios Pantazis. http://mcgovern.mit.edu/technology/neurotechnology-program

References

Khaligh-Razavi SM, Bainbridge W, Pantazis D, Oliva A (2016) From what we perceive to what we remember: Characterizing representational dynamics of visual memorability. bioRxiv, 049700.

Cichy RM, Pantazis D, Oliva A (2014) Resolving human object recognition in space and time. Nature Neuroscience, 17:455–462.

Additional documentation

Forum: Decoding in source space: http://neuroimage.usc.edu/forums/showthread.php?2719